Robert G ZAMENHOF, PhD

1. THE TRUTH BEHIND RED MERCURY
2. GLOBAL POSITIONING TECHNOLOGY: HISTORY & SCIENTIFIC PRINCIPLES
3. THE JOINT COMPREHENSIVE PLAN OF ACTION NUCLEAR AGREEMENT WITH IRAN: WHAT IRAN AND THE U.S. HAVE AGREED UPON & THE SCIENCE BEHIND IT (as of December 2015)
4. NATURAL RADIATION: WHERE DOES IT COME FROM & WHAT DOES IT DO TO US?
5. PRINCIPLES OF OPERATION & POTENTIAL HEALTH RISKS OF AIRPORT X-RAY SCANNERS
6. HOW DANGEROUS IS RADIATION TO HUMANS—OR IS IT?
7. COLD FUSION: A PROMISE OF GLOBAL SALVATION OR A HUGE SCIENTIFIC HOAX?
8. DEPLETED URANIUM: HEALTH RISKS IN MILITARY AND CIVILIAN USES
9. MODERN TECHNOLOGICAL DEVELOPMENTS IN RADIATION ONCOLOGY
10. DARK MATTER & DARK ENERGY: SERIOUS OR STAR TREK?
11. RADIOMETRIC DATING
12. DO CT SCANS REALLY KILL PATIENTS?

There are totally unsubstantiated reports that shortly before the Soviet Union's demise, the Soviet government decided to rid itself of all its supplies (if such supplies ever existed) of Red Mercury to protect the nation from terrorist elements that might utilize Red Mercury to manufacture ultra-compact ("pocket") fusion weapons. The Red Mercury was reputedly eliminated by packaging small quantities of it within small household appliances, such as toasters, sewing machines, etc., which were then exported out of the Soviet Union. The contorted logic of such a decision by a world power defies common sense; but not, apparently, for the ISIS buyers who were ordered to purchase such household items from the Soviets to gain ownership of Red Mercury hidden inside them, the "doomsday" material that could potentially change the face of terrorism throughout the world. This picture shows some Arab believers contemplating two sewing machines that ostensibly contain Red Mercury. Such Red Mercury containing appliances are still today being sold for hundreds of thousands of dollars to the dumb and ignorant on the international terrorism markets.

Introduction

C.J. Chivers, in the New York Times, wrote an excellent, though scientifically lay-level introduction to the present interest by ISIS in obtaining supplies of the “doomsday material of dreams” called Red Mercury. I would like to copy and paste his excellent introduction to this topic before delving more deeply into the science. The hunt for the ultimate weapon began in January 2014, when Abu Omar, a smuggler who fills shopping lists for the Islamic State, met a jihadist commander in Tal Abyad, a Syrian town near the Turkish border. The Islamic State had raised its black flag over Tal Abyad several days before, and the commander, a former cigarette vendor known as Timsah, Arabic for ‘‘crocodile,’’ was the area’s new security chief. The Crocodile had an order to place, which he said he had received from his bosses in Mosul, a city in northwestern Iraq that the Islamic State would later overrun. Abu Omar, a Syrian whose wispy beard hinted at his jihadist sympathies, was young, wiry and adaptive. Since war erupted in Syria in 2011, he had taken many noms de guerre — including Abu Omar — and found a niche for himself as a freelance informant and trader for hire in the extremist underground. By the time he met the Crocodile, he said, he had become a valuable link in the Islamic State’s local supply chain. Working from Sanliurfa, a Turkish city north of the group’s operational hub in Raqqa, Syria, he purchased and delivered many of the common items the martial statelet required: flak jackets, walkie-talkies, mobile phones, medical instruments, satellite antennas, SIM cards and the like. Once, he said, he rounded up 1,500 silver rings with flat faces upon which the world’s most prominent terrorist organization could stamp its logo. Another time, a French jihadist hired him to find a Turkish domestic cat; Syrian cats, it seemed, were not the friendly sort. War materiel or fancy; business was business. 
The Islamic State had needs, it paid to have them met and moving goods across the border was not especially risky. The smugglers used the same well-established routes by which they had helped foreign fighters reach Syria for at least three years. Turkish border authorities did not have to be eluded, Abu Omar said. They had been co-opted. ‘‘It is easy,’’ he boasted. ‘‘We bought the soldiers.’’ This time, however, the Crocodile had an unusual request: The Islamic State, he said, was shopping for red mercury. Abu Omar knew what this meant. Red mercury — reputedly precious and rare, exceptionally dangerous and exorbitantly expensive, its properties unmatched by any compound known to science — was the stuff of doomsday daydreams. According to well-traveled tales of its potency, when detonated in combination with conventional high explosives, red mercury could create the city-flattening blast of a nuclear bomb. In another application, a famous nuclear scientist once suggested it could be used as a component in a neutron bomb small enough to fit in a sandwich-size paper bag. Abu Omar understood the implications. The Islamic State was seeking a weapon that could do more than strike fear in its enemies. It sought a weapon that could kill its enemies wholesale, instantly changing the character of the war. Imagine a mushroom cloud rising over the fronts of Syria and Iraq. Imagine the jihadists’ foes scattered and ruined, the caliphate expanding and secure. Imagine the price the Islamic State would pay. Abu Omar thought he might have a lead. He had a cousin in Syria who told him about red mercury that other jihadists had seized from a corrupt rebel group. Maybe he could arrange a sale. And so soon Abu Omar set out, off for the front lines outside Latakia, a Syrian government stronghold, in pursuit of the gullible man’s shortcut to a nuclear bomb. [C.J. 
Chivers, New York Times]

The Science Behind Red Mercury's "Awesome Powers"

There is nothing “magic” or “doomsday” about Red Mercury. The red-orange appearance of this material is simply due to its compounding with iodine as mercury iodide. None of the isotopes of the element mercury are fissile. This means that they cannot be “split” by absorbing neutrons the way uranium-235 or plutonium-239 can. It is the splitting of a heavy isotope into two lighter isotopes that releases the extraordinary yield of energy that is the basis of the fission nuclear reaction, exemplified by the two nuclear bombs, Little Boy and Fat Man, dropped on Hiroshima and Nagasaki in August 1945. Nor can Red Mercury have any role in nuclear fusion, since the process of nuclear fusion requires the nuclear coalescence under very high temperature and pressure of two very light isotopes, such as hydrogen, helium, deuterium, or tritium, which are about 100 times lighter than mercury.

A brief explanation of the operating principle of thermonuclear (fusion) weapons is useful to explain the contorted pseudoscience behind Red Mercury. In the cold-war days, when the U.S. believed that there was a high likelihood that its thermonuclear weapons arsenal would in fact be used, the light isotopes that were used as the fusion fuel in its thermonuclear bombs and warheads were deuterium (hydrogen with an additional neutron) and tritium (hydrogen with two additional neutrons). A primary fission (uranium-235 or plutonium-239) stage of a thermonuclear weapon when triggered would create the enormously high temperatures and pressures required for the secondary stage of the weapon, the fusion stage, to be set off. Unfortunately, tritium is a radioisotope with a half-life of about 12.3 years, so every 12 or so years the potential explosive power of the U.S. thermonuclear weapons arsenal was diminished by about 50%, and the weapons had to be recharged with fresh tritium.
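The halving described above follows the standard radioactive decay law. As a rough sketch (the function name is illustrative, and the half-life is an assumed round figure of roughly 12 years, not a weapons-program value), the fraction of tritium left after a given time can be estimated like this:

```python
# Assumed, approximate half-life of tritium in years (illustrative value).
HALF_LIFE_YEARS = 12.3

def fraction_remaining(years, half_life=HALF_LIFE_YEARS):
    """Fraction of the original tritium left after `years` of decay."""
    # Each half-life that passes cuts the remaining amount in half.
    return 0.5 ** (years / half_life)

if __name__ == "__main__":
    for years in (0, 5, HALF_LIFE_YEARS, 25):
        share = fraction_remaining(years)
        print(f"after {years} years: {share:.0%} of the tritium remains")
```

The same function shows why the decay cannot simply be ignored for a few years: a noticeable share of the tritium is already gone well before one full half-life has passed.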
Recharging was not a simple procedure considering the thousands of nuclear bombs and warheads spread out over the U.S. that required this attention, as well as the slow production rate of fresh tritium in the Savannah River nuclear reactor. Towards the end of the cold war, both the Soviet Union and the U.S. developed a solution to this problem: instead of deploying deuterium and tritium as the fusion isotopes in thermonuclear bombs and warheads, the compound lithium-6-deuteride was used. Lithium-6 has the property of avidly absorbing neutrons generated by the primary fission stage of a thermonuclear weapon. After doing so, lithium-6 disintegrates, producing the isotope tritium. Therefore, the supply of deuterium and tritium for the subsequent fusion reaction was made immediately available, but only when needed, at the moment the initial fission stage of the weapon was triggered. This was a big step forward in the design of thermonuclear weapons, and there is some credible evidence that in the Soviet Union’s defense establishment the code name for lithium-6-deuteride was Red Mercury.

Pseudoscientific theories have abounded as to the utility of Red Mercury for weapons of mass destruction. One totally baseless theory asserts that with the catalytic presence of Red Mercury, the enrichment of natural uranium to weapons-grade uranium-235 can occur at a much faster rate than with the more conventional centrifuge technology. Another theory claims that if Red Mercury is subjected to very high pressures, it releases extraordinarily large amounts of heat, and could, therefore, replace the initial fission stage of a thermonuclear weapon by triggering the secondary fusion stage of the thermonuclear reaction. Yet another theory claims that if Red Mercury is irradiated for extensive periods of time in a nuclear reactor, radiation is absorbed (according to some unknown scientific principle) by the Red Mercury molecules.
Then, if subjected to mechanical shockwaves, the absorbed energy in the Red Mercury is released and can either function as an independent weapon, or can replace the initial fission stage of a thermonuclear weapon, resulting in a device that is physically much more compact and safer prior to its detonation. However, there is absolutely no scientific support for Red Mercury having any of the properties described above. On a slightly humorous point, many centuries ago, mercury was the most common element used by alchemists to create "new" elements.

Summary

The only evidence of world powers being involved in any way with Red Mercury is that possibly toward the end of the cold war, the Soviet Union coined Red Mercury as a code word for lithium-6-deuteride, the fusion fuel for modern thermonuclear weapons. There is absolutely no scientific support for Red Mercury having any role either in the accelerated enrichment of uranium or in the construction of thermonuclear weapons. Consequently, Red Mercury is probably one of the more audacious international hoaxes perpetrated to date. ISIS, nevertheless, has believed this hoax and has attempted to scare the rest of the world into believing that they have developed, or are in the process of developing, a “doomsday weapon of mass destruction” based on the totally fabricated properties of Red Mercury. Perhaps the idea of Red Mercury and its ostensibly "awesome" properties is a modern version of alchemy! But in a sense the world should be grateful that, due to its continuing naïve belief in the Red Mercury hoax, ISIS has squandered enormous amounts of its financial resources to buy up supplies of Red Mercury from international con men. Parenthetically, one wonders where ISIS has decided to dump all the toasters, sewing machines, and other domestic appliances that it spent millions of dollars purchasing, once the Red Mercury supposedly "hidden" inside them had been removed. Well, see below...

U.S.
GPS satellite, orbiting earth at a distance of 12,550 miles (5% of the distance to the moon). Solar panels provide power. The satellite also contains rocket motors to enable fine repositioning, and nuclear detonation detection "listening" devices so the U.S. military can immediately and precisely locate a nuclear detonation anywhere on earth; useful for test-ban treaty compliance.

Trajectories of the 31 active U.S. GPS satellites circling the earth, twice every 24 hours. At least 6 satellites are visible at any time from any position on the earth's surface. For example, at the head of the arrow 9 satellites are visible. Only 3 visible satellites are theoretically required to determine location, so the additional 6 visible satellites create a strong redundancy and improved accuracy.

Introduction

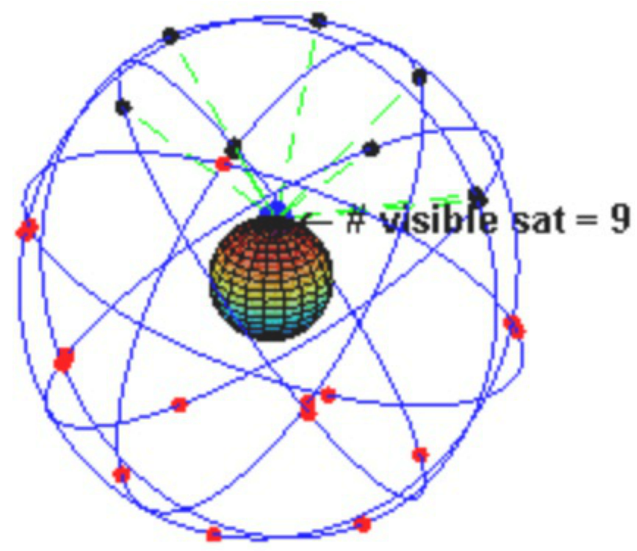

Global Positioning Systems (GPS) have been a familiar consumer technology for the past decade, but the ingenuity and great complexity of GPS is often belied by its intuitively simple principles. This article describes how GPS in the U.S. has evolved from the early OMEGA Navigation System of the 1970s into the highly sophisticated 24-satellite GPS system whose first satellite was launched in 1989. Current international GPS functionality is based on a combination of U.S. and Russian Federation satellites, which results in a potential localization accuracy of approximately 6 meters (about 20 feet). For military applications such as missile guidance, far greater accuracy is obtainable. Although the most frequent use of GPS is in the consumer market, GPS technology has been in extensive use by the U.S. military since 2009.

History

In the 1970s, the Omega Navigation System developed by the U.S. became the progenitor of today’s GPS technology. Omega was based on receivers comparing the time-of-arrival of signal transmissions from pairs of ground-based transmitting stations and calculating the position of the receiver (for example, an aircraft) by the triangulation principle. Omega became the first worldwide radio navigation system; but, as its technology evolved into three dimensions using space-based satellites, its access became restricted to military use. The first U.S. GPS satellite was launched in 1989, and the 24th, and last, in 1994. Initially, the civilian sector did have limited access to 3D GPS technology, but the signals available were intentionally degraded by the defense department to restrict the use of the fully accurate GPS system for weapons guidance and other military applications by unfriendly powers. In 1996, recognizing the importance of GPS for civilian air navigation, President Clinton issued a policy directive declaring GPS to be a “dual-use system” and established an Interagency GPS Executive Board to manage it as a national asset.
With the removal of the intentional degradation of the GPS signals, the accuracy of civilian-accessible GPS improved from about 300 feet to about 60 feet. In 1998, with aviation civil navigation in mind, Vice President Gore announced plans to upgrade the GPS system with two additional civilian channels for enhanced accuracy and reliability, and in 2000 the U.S. Congress authorized and funded the effort. Current Status of GPS The upgraded U.S. GPS system, consisting of 24 orbiting satellites, is divided into six orbital planes with four satellites in each plane. An orbital plane is like a circular disk, where the edge of the disk defines the path of the satellite. The centers of all these orbital planes coincide with the center of the Earth (as shown in the 2nd picture above at a moment in time when 9 satellites are visible at the point on the earth's surface shown by the head of the arrow). The orbits were designed so that at least six satellites would always be visible within line-of-sight from anywhere on the earth’s surface. Since only three satellites are theoretically needed to establish a receiver’s three-dimensional location, this provided a lot of redundancy to compensate for transmissions blocked by building structures, malfunctioning satellites, etc. Orbiting at an altitude of 12,550 miles (about 5% of the distance from the earth to the moon), each satellite makes two complete orbits every 24 hours, covering the same projected track on the ground every day. From spherical geometry, this means that 4 satellites are visible from each location on the earth’s surface for a few hours a day. This makes positioning a much faster process. As of March 2008, there have been in fact 33 GPS satellites in orbit--31 active and two in retirement that are maintained as spares. 
In this new arrangement, about ten rather than six satellites are visible from any location on the ground at any moment in time, providing additional redundancy and increased accuracy of the GPS system. The flight-paths of GPS satellites are tracked by dedicated U.S. Air Force monitoring stations in Hawaii, Kwajalein, Ascension Island, Diego Garcia, Colorado Springs, Colorado, and Cape Canaveral, along with shared monitoring stations operated in England, Argentina, Ecuador, Bahrain, Australia and Washington DC. Each satellite is contacted at regular intervals with orbital and software updates using ground antennas. These updates synchronize atomic clocks on board the satellites and correct the satellites’ orbits when necessary. Atomic clocks, the most accurate timing technology in existence, provide the very high timing accuracy needed to synchronize the transmission of GPS radio signals from different satellites and to account for the so-called “Doppler shift,” a discrepancy in timing that occurs when a satellite transmits its signal while moving toward or away from the receiver (the Doppler shift is the familiar change in pitch of ambulance sirens as they move toward and away from a listener). Additionally, changes in atmospheric conditions and air humidity affect the velocity of the GPS signals as they pass through the earth’s atmosphere. Differences in receiver altitude introduce further discrepancies in timing due to the signals passing through less thickness of atmosphere at higher receiver elevations, but this effect is more relevant to aircraft navigation systems than to land-based navigation systems. GPS localization accuracy can also be affected when the radio signals reflect off surrounding buildings, canyon walls, hard ground, etc., due to reflective phase-shifts in the radio frequency signals. Finally, the GPS satellites’ atomic clocks also suffer errors due to Einstein’s general relativity principle. 
When different satellites viewed by a GPS receiver are moving relative to the GPS receiver at different speeds and/or experiencing different gravitational forces by virtue of different altitudes, their clock speeds will slightly vary. With all the above corrections carefully accounted for, the accuracy of civilian GPS systems today is about 6 meters (about 20 ft.), although for strictly military uses such as missile guidance, the accuracy is substantially improved.

Inclusion of WiFi Transmissions in GPS Location

More recently, in addition to receiving GPS signals from orbital satellites, some GPS receivers can also detect WiFi signals originating in their close vicinity. The locations of the WiFi transmitters are looked up in a publicly accessible WiFi database, and the location of the GPS receiver is then found by triangulation. This is a better system for positioning when the receiver happens to be indoors, in a tunnel, among very tall buildings, etc., since GPS signals experience degradation when passing through masonry or concrete.

Russian Federation’s GLONASS GPS System

In 1995, the Russian Federation’s satellite navigation system became operational. The system has the acronym GLONASS, which stands for Globalnaya Navigatsionnaya Sputnikovaya Sistema. Loosely translated into English, this means Global Navigation Satellite System. GLONASS was initially operated by the Russian Federal Space Agency. As with the declassification of the USA’s GPS system, President Vladimir Putin in 2007 ordered all military restrictions to be removed from the GLONASS system so that it could be used by the civilian sector as well as by the military. At the present time, the accuracy of the Russian Federation’s GLONASS system is about 7-8 meters (20-25 feet), similar to the current fully unrestricted civilian accuracy of the US GPS system.
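To see why the clock corrections described earlier matter so much, it helps to translate the accuracy figures quoted above into time. The following is a minimal sketch, assuming the signal travels at the vacuum speed of light and that the satellite is directly overhead (so the 12,550-mile altitude is the shortest possible path); the function names are illustrative only.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # defined value of the speed of light
METERS_PER_MILE = 1609.344

def timing_error_ns(position_error_m):
    """Clock error (in nanoseconds) that corresponds to a given range error."""
    return position_error_m / SPEED_OF_LIGHT_M_PER_S * 1e9

def travel_time_ms(distance_miles):
    """Signal travel time (in milliseconds) over a straight-line distance."""
    return distance_miles * METERS_PER_MILE / SPEED_OF_LIGHT_M_PER_S * 1e3

if __name__ == "__main__":
    print(f"6 m of range error  ~ {timing_error_ns(6):.1f} ns of clock error")
    print(f"8 m of range error  ~ {timing_error_ns(8):.1f} ns of clock error")
    print(f"12,550 miles        ~ {travel_time_ms(12_550):.1f} ms of travel time")
```

In other words, a position accuracy of a few meters requires the timing of a signal that has traveled for tens of milliseconds to be known to within a few tens of billionths of a second, which is why atomic clocks and the corrections listed above are needed.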
Sweden’s SWEPOS GPS System

In 2011, Sweden’s SWEPOS network of satellite reference stations, which provide data for real-time positioning, became the first known foreign organization to use the Russian Federation’s GLONASS system. In Sweden, the accuracy of GLONASS as integrated into the SWEPOS system is about 1 meter (about 3 feet). An obvious question is how closely GLONASS and the U.S. GPS agree with each other. Interestingly enough, they don’t! This is because, as the absolute origin of its coordinate system, GLONASS uses the North Pole’s global position as measured in 1990 by satellite interferometry, while the U.S. uses the North Pole’s global position as measured in 1984. These two measurements differ by approximately 40 cm (1-1.5 feet). It is interesting to note that as part of its “Maps” application, Apple iPhones and iPads use both the U.S. GPS and the GLONASS satellites for location.

Military Uses of GPS

The list below shows the many applications of GPS by the U.S. military. Navigation: Soldiers use GPS to find objectives, even in the dark or in unfamiliar territory, and to coordinate troop and supply movement. Commander ranks use the Commanders Digital Assistant, while lower ranks use the Soldier Digital Assistant. Target tracking: Various weapons used by the U.S. military use GPS to track possible ground and air targets before flagging them as hostile. These weapon systems pass target coordinates to precision-guided munitions to allow them to engage targets accurately. Military aircraft, particularly in air-to-ground roles, also use GPS to locate targets. Missile and projectile guidance: GPS allows accurate targeting of various military weapons including ICBMs, cruise missiles, precision-guided munitions and artillery projectiles. Embedded GPS receivers, able to withstand accelerations of up to 12,000g, have been developed for use in 155-millimeter howitzers. Reconnaissance: Patrol movement can be managed more closely using GPS.
Nuclear detonation location: U.S. deployed GPS satellites each carry a set of nuclear detonation detectors, consisting of an optical sensor, an x-ray sensor, a dosimeter, and an electromagnetic pulse sensor, that form a major portion of the United States Nuclear Detonation Detection System. As mentioned earlier, the implementation of GPS technology by the U.S. military, although utilizing the same satellites as those used for civilian GPS, processes the satellites' signals differently, resulting in about 10-50 times greater precision.

Summary

Global Positioning Systems have been a familiar consumer technology for the past decade or more. The U.S. GPS system evolved from the early OMEGA Navigation System of the 1970s into the highly sophisticated 24-satellite GPS system whose first satellite was launched in 1989. Following the declassification of the U.S. and Russian Federation’s GPS systems by President Clinton and President Putin, and the increased technological collaboration between these two countries, the current international civilian GPS functionality results in a potential localization accuracy of approximately 6 meters (about 20 feet). The civilian Swedish SWEPOS system of ground-based GPS receivers, integrated with the Russian Federation’s GLONASS system, produces an impressive localization accuracy of about 1 meter (about 3 feet). Military uses of GPS have existed since 2009, and include smart-bomb, howitzer shell, and guided missile guidance, target tracking, and navigation by soldiers in unfamiliar territory or at night. The military GPS receivers process the GPS satellite signals differently than those used by civilians and are able to improve the accuracy of GPS navigation by factors of 10-50.

Gas centrifuge "farm" for separating uranium-238 from uranium-235. The vertical tubes are the centrifuges, having internal elements that spin very fast along the vertical axis. Uranium Hexafluoride gas (with natural uranium) is passed into the centrifuges.
Uranium-235 and uranium-238 are then separated by virtue of the slightly different centripetal forces acting on the two slightly different nuclear masses. Uranium-238 becomes concentrated at a larger radius within the centrifuge than uranium-235. The two uranium isotopes are then separately extracted from the centrifuges. Posted on April 3, 2013 by Dr Simple Science

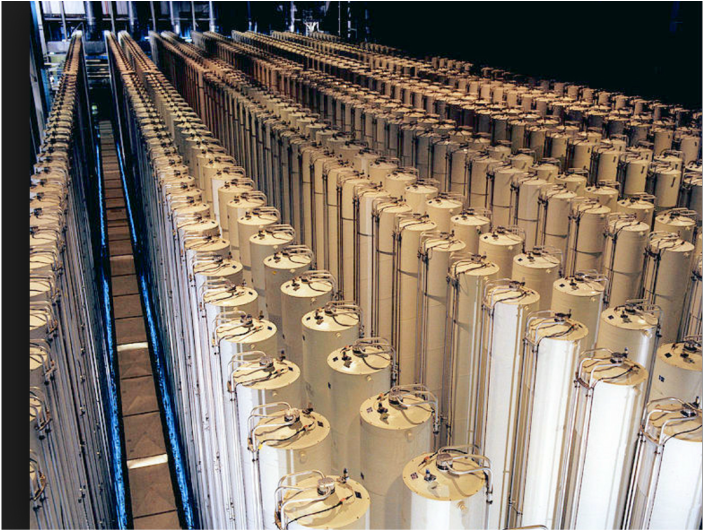

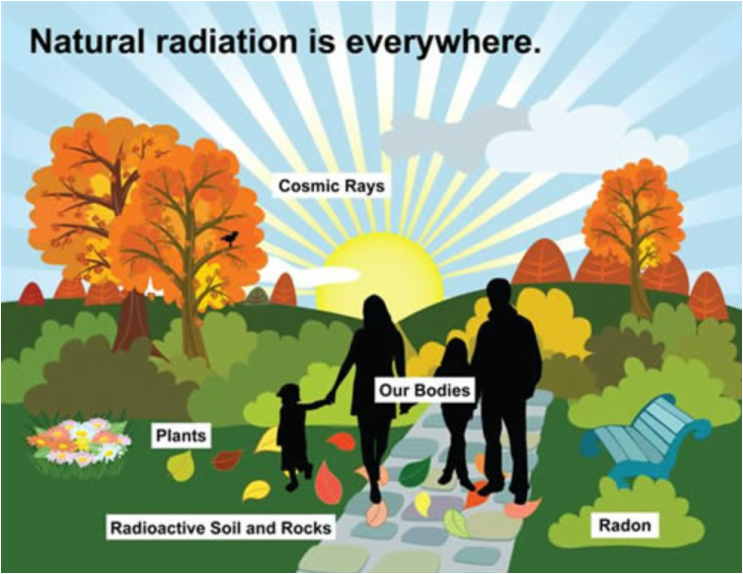

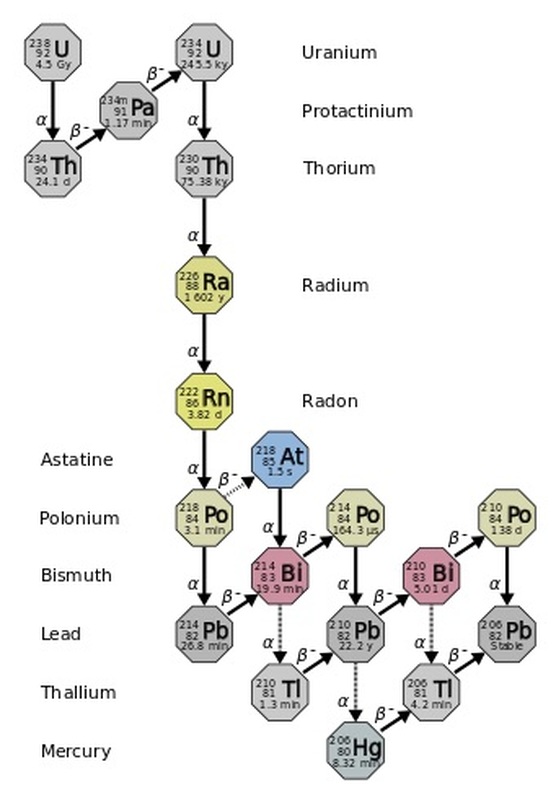

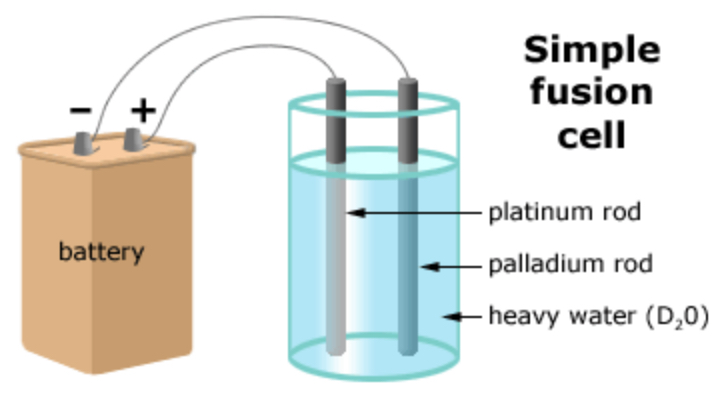

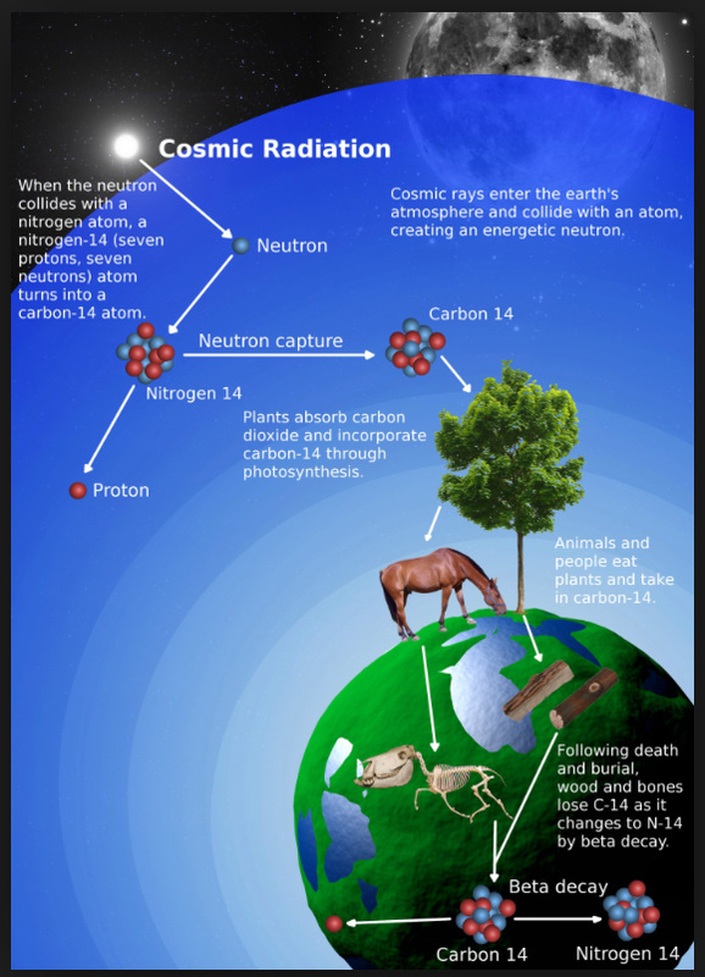

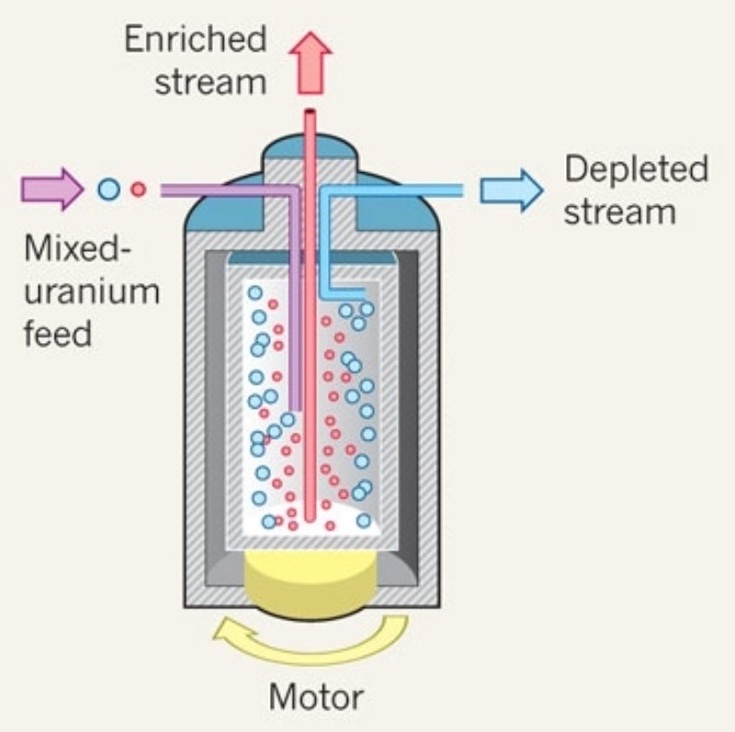

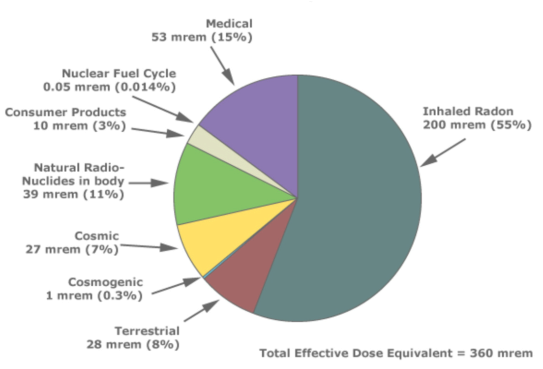

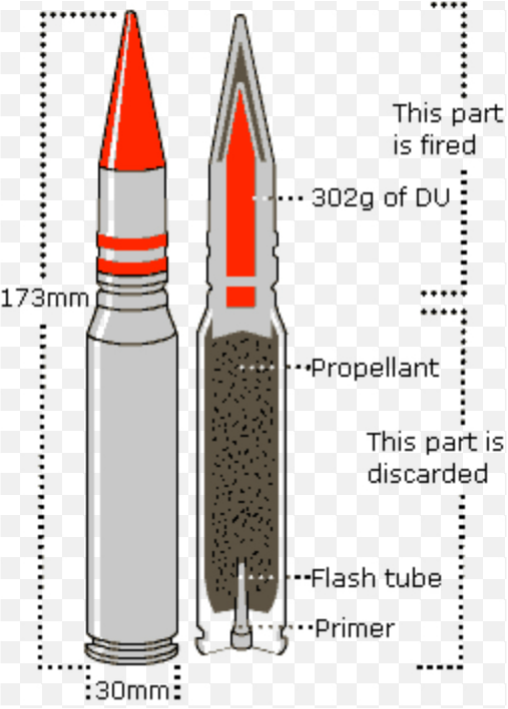

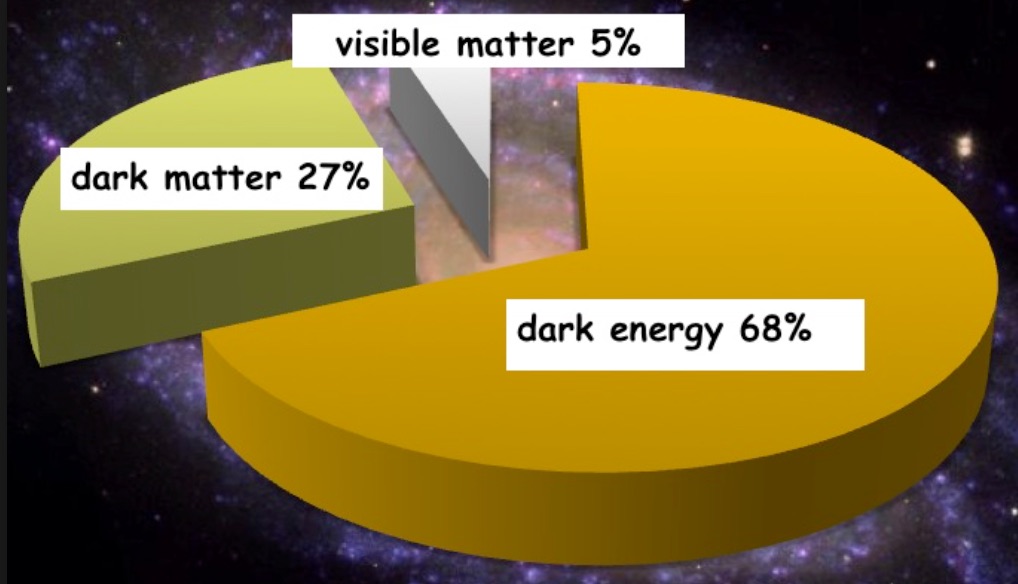

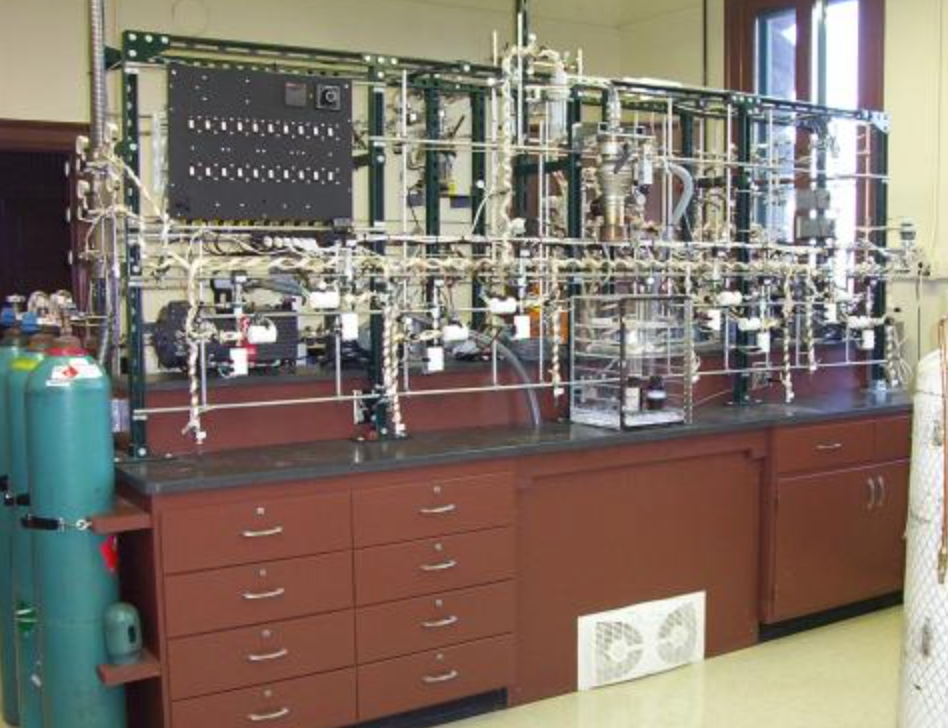

Introduction Iran has always claimed that it is enriching uranium as a necessary step toward providing various civilian services, such as radioisotopes for nuclear medicine, a civilian nuclear power program, and a civilian nuclear research program. However, this has clashed with widespread international belief that Iran’s claims are simply a cover for a much more nefarious goal, that of joining the Nuclear Club of nations possessing nuclear weapons–-without being invited, and, more specifically, for "wiping Israel off the face of the earth". The recently agreed upon Joint Comprehensive Plan of Action (JCPOA) between Iran and the U.S., together with five of the other principal nuclear nations, has caused a kerfuffle in the U.S. Congress and strong condemnation by Israel. Israel's Prime Minister, Bibi Netanyahu, is highly skeptical about the value of the JCPOA agreement and still insists that the U.N. “draw a red line” beyond which Iran’s nuclear development should not be tolerated by the international community and, if violated, might result in preemptive strikes by Israel against Iran's uranium enrichment and plutonium conversion facilities--as has already occurred on two previous occasions. Mr. Netanyahu has also warned that as early as this summer, despite the JCPOA agreement, Iran’s uranium enrichment and nuclear warhead fabrication facilities are expected to be moved to dispersed underground locations, making it far more difficult, if not impossible, to achieve successful verification, therefore rendering the JCPOA agreement largely ineffective. Since uranium enrichment by Iran and its progress towards nuclear weapon acquisition has produced a substantial amount of public fear in past years, I will try to clarify some of the scientific facts behind these issues. Summary of the JCPOA Agreement Between the U.S. and Allied Nations and Iran Iran's obligations * The primary uranium-235 enrichment site in Iran is Natanz (see map above). 
Under the JCPOA agreement, Natanz will be permitted to operate 5,060 uranium enrichment centrifuges. This is about 25% of Iran's 20,000 currently operating centrifuges, and the permitted machines will be older, much less efficient models that will be far slower in enriching uranium than the current ones.
* Iran's stockpile of uranium will be reduced by 98% to 300 kg (660 lbs) for 15 years, and will not be allowed to exceed an enrichment level of 3.67% (see below for further discussion of enrichment).
* The Arak reactor (see map above), which according to its original design would have been a source of fissile plutonium-239 for manufacturing at least one nuclear weapon per year, will be transformed to produce far less plutonium than before and of a poorer quality. Fundamentally, this would limit plutonium-239 production and make it virtually impossible to fabricate any plutonium-239 based nuclear weapons.
* All spent fuel from the Arak reactor that could potentially be reprocessed to recover plutonium-239 will be sent out of the country under a rigorous IAEA inspection protocol. In fact, Iran will ship out all spent fuel from all of its power and research reactors, preventing the accumulation of any spent fuel from which plutonium-239 could be extracted, and will not engage in any activity associated with the reprocessing of spent fuel to obtain plutonium-239, even for research purposes.

U.S. and the 5 Allied Nations' Obligations

* On the part of the U.S. and the five other allied nations in the JCPOA agreement, the commitment given to Iran in response to Iran's acceptance of the above conditions is to end the severe sanctions that were imposed on Iran in 2010. Quoting a memorandum from the U.S.
State Department, "These sanctions were designed: (1) to block the transfer of weapons, components, technology, and dual-use items to Iran’s prohibited nuclear and missile programs; (2) to target select sectors of the Iranian economy relevant to its proliferation activities; and (3) to induce Iran to engage constructively, through discussions with the United States, China, France, Germany, the United Kingdom, and Russia in the “E3+3 process,” to fulfill its nonproliferation obligations". Specific Technology of Uranium and Plutonium Processing for Nuclear Reactor Fuel and Nuclear Weapons Manufacture Uranium mined from the ground contains various uranium isotopes, including uranium-235, the uranium isotope needed for the manufacture of fission nuclear weapons and nuclear reactor fuel. The "raw" uranium is first combined with hydrofluoric acid, which reacts with the uranium to produce uranium hexafluoride gas. The uranium hexafluoride gas is fed into centrifuges that spin the gas at extremely high speed (see the second picture above). By centripetal force, the heaviest uranium isotope, uranium-238, is forced to the outer wall of the centrifuge where it is extracted, while uranium-235 remains along the central axis of the centrifuge where it is separately extracted. However, this approach to uranium-235 separation is notoriously slow, so to offset this problem a very large number of very large advanced design centrifuges operating simultaneously is required (see third picture above). Limiting the number and efficiency of Iran's centrifuges is the first step in the JCPOA agreement in preventing Iran from enriching uranium to the level where fission nuclear weapons could be manufactured. The next step is limiting the enrichment level of Iran's entire stockpile of uranium to prevent its further enrichment to weapons grade uranium. 
The final step is to modify the cores of those nuclear reactors which are capable of rapidly producing high quality plutonium-239, which can also potentially be manufactured into fission nuclear weapons, and to send out of the country all spent reactor fuel rods to prevent Iran from extracting plutonium-239 from the burned fuel.

Principles of Uranium Enrichment and Uranium-235 Fission Rates for Nuclear Reactors vs. Fission Weapons

"Raw" or “natural” uranium, as it comes out of the ground, consists of about 0.7% uranium-235 and about 99% uranium-238 (there are a few unimportant additional isotopes of uranium present that account for the remaining 0.3%). Although the uranium-235 and uranium-238 isotopes are chemically identical, uranium-235 is "fissile", whereas uranium-238 is not. Uranium-235 is the only naturally occurring fissile isotope that is suited for the manufacture of nuclear weapons and nuclear reactor fuel. A fissile isotope is one whose nucleus can be induced to break apart, or “fission”, following bombardment by nuclear particles called neutrons. In the case of uranium-235, the nucleus absorbs a neutron that makes it unstable and causes it to break apart. In doing so, it emits 2-3 outgoing neutrons, accompanied by a tremendous amount of energy, mainly as heat and light, but also as ionizing radiation (the kind of radiation that is potentially harmful to humans). Each of the 2-3 outgoing neutrons can now act as an incoming neutron in the next round of uranium-235 nuclear interactions, each of which once again produces a uranium-235 fission with the emission of 2-3 more outgoing neutrons and more energy. Therefore, the uranium-235 fission rate grows "exponentially" with time and, if not controlled, continues until all uranium-235 has been used up.
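The exponential growth described above can be illustrated with a very simple model. This is only a sketch under idealized assumptions: every generation of fissions is treated as multiplying the neutron population by a fixed factor (called `k` here, a label introduced for this example), and neutron losses and the using up of fuel are ignored.

```python
def neutrons_after(generations, k, start=1):
    """Neutron population after a number of fission generations.

    `k` is the (assumed constant) number of neutrons from each fission
    that go on to cause another fission.
    """
    return start * k ** generations

if __name__ == "__main__":
    for k in (1, 2, 3):
        population = [neutrons_after(g, k) for g in (0, 1, 5, 10, 20)]
        print(f"k = {k}: {population}")
```

With `k` equal to 2 or 3, matching the 2-3 neutrons released per fission, the population explodes within a few dozen generations; with `k` equal to 1 it stays constant from one generation to the next.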
Uranium-235 for Nuclear Reactor Fuel

Since each uranium-235 fission requires one neutron to be absorbed (i.e., removed from the neutron population), each fission event increases the neutron population by 1-2 neutrons (2-3 neutrons produced in each fission, minus the one neutron that is absorbed to initiate it). If each fission event on average absorbed one neutron and emitted only one neutron that could initiate the next fission, the neutron population would remain roughly constant with time; this is the safe, “steady-state” condition under which nuclear reactors typically operate and produce energy. But to ensure that steady-state condition, all but one of the outgoing neutrons, on average, in each fission event must be "blocked" from causing further fissions. This blocking to some extent occurs naturally, due to the presence of uranium-238, which can absorb neutrons but does not undergo fission. But it is also achieved in a more controlled way using "control rods", which can be inserted into the uranium fuel to various depths. Control rods contain the isotope boron-10, which very strongly absorbs neutrons. Therefore, the fission rate in the uranium fuel is "tuned" by the precise positioning of the control rods in the core to maintain a safe and constant fission rate. If, on the other hand, the fission rate were to increase exponentially in a nuclear reactor’s core, dangerous overheating could result, with the further possibility of more serious consequences such as loss of core coolant followed by core meltdown. This is what happened in the Japanese Fukushima Daiichi reactor accidents in 2011.

Uranium-235 for Nuclear Fission Weapons

In contrast, to manufacture uranium-235 fission weapons, we want the maximum amount of fission energy produced in the shortest possible time, which means we need to let the fission rate rapidly increase unchecked until all the uranium-235 has been used up.
This means that following "triggering" of the fission process, the uranium-235 fission-rate would increase at a higher and higher rate, i.e., "exponentially". In a nuclear fission weapon, the lack of control rods is insufficient to ensure that the growth in fission-rate is as rapid as possible. But because, as already mentioned, natural uranium is mainly composed of neutron-absorbing--but non-fissile--uranium-238, potential problems are encountered in trying to utilize uranium fission for nuclear weapons. The large amount of uranium-238 significantly “eats up” the fission neutrons produced by the uranium-235, down-regulating the uranium-235 fission-rate just as control rods do in a nuclear reactor core. So the presence of uranium-238 is highly undesirable if the uranium is to be used for a nuclear weapon where the fission-rate must increase as rapidly as possible. Therefore, for the manufacture of nuclear weapons, the uranium-235 enrichment level is increased to at least 20%, but more commonly to 90% or higher, to minimize the deleterious (sometimes referred to as "poisoning") effect of uranium-238. [For similar reasons, although raw or natural uranium (with a uranium-235 fraction of about 0.7%) could, in principle, be used as a cheap and very safe fuel for nuclear reactors, much better fuel-to-energy conversion efficiency is obtained with slightly higher enrichment levels of 0.9-2%.] 
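To make the "eating up" of neutrons by uranium-238 more concrete, here is a small Python sketch that counts how many uranium-238 nuclei accompany each uranium-235 nucleus at the enrichment levels mentioned above. It is a rough illustration only: the function name `u238_per_u235` is made up for this example, all non-fissile uranium is assumed to be uranium-238, and the (very different) neutron absorption probabilities of the two isotopes are ignored.

```python
def u238_per_u235(enrichment_percent):
    """Number of U-238 nuclei per U-235 nucleus at a given enrichment.

    Assumption for illustration: everything that is not U-235 is U-238.
    """
    fissile_fraction = enrichment_percent / 100.0
    return (1.0 - fissile_fraction) / fissile_fraction


# Enrichment levels taken from the text: natural, 20%, and 90%.
for label, percent in [("natural", 0.7), ("20%", 20.0), ("90%", 90.0)]:
    ratio = u238_per_u235(percent)
    print(f"{label}: about {ratio:.1f} U-238 nuclei per U-235 nucleus")
```

In natural uranium each fissile nucleus is surrounded by roughly 140 neutron-absorbing uranium-238 nuclei, whereas at 90% enrichment there is only about one for every nine fissile nuclei, which is why high enrichment matters so much for weapons.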
The Politics of Uranium Enrichment

From the perspective of the recent JCPOA agreement, if Iran were committed to maintaining its 660 lb stockpile of uranium at an enrichment level of 20% or lower (as it was permitted to do in a previous deal proposed by Russia and initially accepted by Iran about 10 years ago), there is a theoretical possibility that Iran still could manufacture nuclear fission weapons; but more realistically, it could use its outdated 5,060 permitted centrifuges to moderately elevate the enrichment of the stockpiled uranium to bring it into a range where nuclear fission weapons could more easily be manufactured. To prevent this from occurring, as part of the JCPOA agreement Iran's stockpile of uranium is not permitted to exceed an enrichment level of 3.67%, from which, as already mentioned, it would be impossible to manufacture nuclear fission weapons. However, for the operation of nuclear reactors that can produce plutonium-239 from uranium-238 (plutonium-239 is an alternative fissile isotope suitable for nuclear fission weapon manufacture), a 3-4% uranium enrichment level is ideal, maximizing the efficiency and, therefore, the speed of uranium-to-plutonium conversion. That is why one component of the JCPOA agreement consists of disabling the ability of Iran's nuclear reactors to produce plutonium-239.

Iran’s uranium enrichment issue, together with the “hair trigger” of Israel’s possible decision to preempt an Iranian nuclear attack on Israel by destroying all of Iran’s nuclear capabilities, is probably the most dangerous military crisis the U.S. has faced since the Cuban missile crisis. We can only hope that the recent diplomatic progress between the U.S. and Iran will lead to a nuclear stand-down between Iran and the Western powers and Israel to give the world another decade or two of nuclear peace.

Summary

Uranium-235 can be enriched to higher percentages than the 0.7% level in naturally occurring uranium.
Up to about 20% enrichment, the uranium can only be used as fuel for nuclear fission reactors, but at enrichment levels greater than 20%, the possibility exists that the uranium can be used to manufacture nuclear fission weapons. Much lower uranium enrichment levels of 3-4% (permitted under the current JCPOA agreement), though precluding the direct manufacture of nuclear fission weapons, could in principle, if used as nuclear reactor fuel, lead to the rapid production of plutonium-239, a fissile isotope of plutonium from which nuclear fission weapons can be manufactured just as they can from uranium-235. Under the JCPOA agreement, the "export" of used nuclear fuel rods out of Iran should, in principle, prevent this from happening.

The concepts of 1) a steady-state fission-rate, maintained through the use of control rods in nuclear reactors, and 2) an exponentially increasing fission-rate, desirable in nuclear fission weapons, are both determined by the fate of the additional neutrons produced with each uranium-235 fission, over and above the one outgoing neutron that is necessary for a steady-state fission rate. With those "extra" neutrons absorbed either by control rods or by the uranium-238 present in the uranium, a steady-state fission-rate is achieved, which is the operation mode of nuclear reactors. However, if the extra neutrons are not removed and become available to cause further uranium-235 fissions, an exponentially increasing fission-rate results which, if allowed to proceed unhindered, ends in a nuclear fission explosion.

The 3.67% enrichment level of Iran's stockpile of uranium, permitted under the JCPOA agreement, although too low to enable nuclear fission weapon manufacture (as well as being precluded from being further enriched by the restrictions on the number and type of the centrifuges Iran is permitted to retain), can nevertheless be manufactured into very efficient nuclear reactor fuel.
Such efficiently fueled nuclear reactors can potentially rapidly produce plutonium-239, from which nuclear fission weapons can also be manufactured. However, one component of the JCPOA agreement consists of disabling the nuclear reactors that are capable of the rapid production of high-quality plutonium-239, and in addition requiring the export of all burnt-up fuel to prevent the covert extraction of plutonium-239 from the uranium fuel residue. Many countries, in particular Israel, consider the JCPOA agreement much too favorable to Iran, with too many loopholes permitting Iran's continued development of nuclear weapons; but, as President Obama has stated, "It was either this deal or no deal". The world can only hope and pray (if that is our disposition) that this agreement will be honored by both sides, which would greatly alleviate the international nuclear tensions that presently exist.

Sources of natural (background) radiation are cosmic rays from space, gamma rays from radioactive soil and rocks, alpha particles produced by radon gas, and the radioactivity within our own bodies. Background radiation depends tremendously on geography, altitude, and habitat architecture. At such low levels of background radiation, the biological effects are so small that no detrimental effects in humans have ever been observed.

Radioactive decay chain of natural radioisotopes within the earth. The chain starts with uranium-238, which has a half-life of 4.5 billion years (4,500,000,000 years, about the same as the age of the earth itself) and has been present since the earth was formed. The decay chain passes through radium-226 and radon-222 (shown in yellow), which are the two radioisotopes now responsible for terrestrial background radiation and radon gas exposure.
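The meaning of a half-life "about the same as the age of the earth" can be checked with a few lines of Python. This is a minimal sketch using the standard half-life decay law; the function name `fraction_remaining` and the constants are introduced here for illustration, and the age of the earth is set equal to the 4.5 billion years quoted above.

```python
def fraction_remaining(elapsed_years, half_life_years):
    """Fraction of a radioisotope left after a given time (half-life decay law)."""
    return 0.5 ** (elapsed_years / half_life_years)


U238_HALF_LIFE_YEARS = 4.5e9  # value quoted in the caption above
EARTH_AGE_YEARS = 4.5e9       # assumption: "about the same" as the half-life

left = fraction_remaining(EARTH_AGE_YEARS, U238_HALF_LIFE_YEARS)
print(f"Uranium-238 remaining since the earth formed: {left:.0%}")  # 50%
```

In other words, roughly half of the uranium-238 that was present when the earth formed is still here today, which is why its decay products continue to contribute to background radiation.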
Introduction

The world of radiation, radioactivity, and radiation risks is pretty complex, and explaining its intricacies is generally left to radiological physicists (such as myself); but unfortunately, radiation topics are also frequently discussed in the press and elsewhere by those who do not understand the science, leading to a lot of misunderstandings. We will attempt to rectify this situation! In this article we will talk about the origins of the various components of "natural" radiation, sometimes referred to as "background" radiation. Natural radiation is composed of different kinds of radiation: cosmic radiation from space, influenced by our elevation and our proximity to polar regions; natural radiation from the ground, resulting from the decay products of primordial radioisotopes that were created in violent stellar events long before the earth formed; a radioactive gas that oozes out of the earth and building materials and irradiates the insides of our lungs when we breathe it; and naturally occurring radioisotopes trapped in our own bodies. The last of these includes two radioisotopes, created by normal nuclear reactions in our atmosphere, which are incorporated into every organ and tissue in our bodies and irradiate us from within. We will also talk about the relative risks of natural radiation vs. other common everyday activities, such as drinking wine, flying, and just living in New York City. And finally, we will talk about consumer products that contribute a very small fraction of natural radiation, with the exception of one product (radioactivity from cigarettes), which can contribute radiation to the linings of our lungs at a level about four times that of the remaining natural radiation!

What kinds of radiation are we talking about?
During everyday life we are exposed to a variety of different types of “natural” radiations; that is in contrast to our “obligatory” exposure to man-made radiations, such as radioactive fallout from nuclear weapons testing (which largely ended in the 1960s) and exposure to medical sources of radiation, such as diagnostic radiology, nuclear medicine, and radiotherapy.

Sources of natural radiation

Terrestrial radiation

Terrestrial radiation emanates from inside the earth, literally under our feet, as well as from the soil, sand, and stone-based building materials that surround us. It consists primarily of two types of radiation: gamma rays and alpha particles. Gamma rays mostly originate from primordial uranium and thorium (ultra-heavy radioactive elements) that were created in violent stellar events long before the earth existed and were trapped in our planet when it formed. Subsequently, the uranium and thorium have decayed into many other radioactive elements, but in terms of the sources of terrestrial radiation, the main ones are radium-226 and the radon-222 that radium-226 decays into (see the second picture above, which shows the "decay chain" of uranium-238 into non-radioactive lead-206; the chain passes through radium-226 and radon-222). It is the subsequent radioactive decay of radium-226 in the soil and its derivative building materials that exposes us to gamma rays. Radon-222 is a gas that oozes (good scientific term!) out of anywhere that radium-226 is present; we are then forced to breathe it, and it exposes us internally to its decay radiation, which consists of alpha particles. Because radon-222 constitutes such a major percentage of our annual natural exposure (55%), we will spend some additional time talking about it and treat it separately from radium-226.

Radon gas inhalation

Radon-222 is formed as one intermediate step in the normal radioactive decay chains through which the primordial isotopes thorium and uranium slowly decay into lead.
The picture at the top of this article of one of the primordial uranium decay chains shows where along the decay chain radium-226 and radon-222 are situated. Radon-222 is particularly hazardous as a component of natural radiation because it decays by emitting alpha particles. Radiation dose delivered by alpha particles is deemed to be 10 times more harmful than radiation dose delivered by x-rays or gamma rays. The reason for this is explained below. Whereas x-rays and gamma rays are termed “sparsely ionizing” radiation, alpha particles are termed “densely ionizing” radiation. X-rays and gamma rays mostly cause DNA single-strand breaks, which are randomly distributed along the DNA molecule and separated by quite long distances, relatively speaking. Since the DNA molecule is composed of two tightly bound spiral strands, it is unlikely that single-strand breaks will occur exactly opposite each other, thereby causing the DNA to break apart. Individual single-strand breaks can be quite easily repaired by specialized enzymes within time periods of about 2-4 hours. In contrast, alpha particles also cause single-strand breaks in the DNA, but at much shorter intervals, so it is therefore much more likely that two such single-strand breaks occur opposite each other, causing the DNA molecule to break apart. These are called “double-strand breaks” and are much more difficult, if not impossible, to repair. In addition to this, alpha particles have a very much shorter penetration depth in tissue than x-rays or gamma rays (in fact about 1,000 times shorter). Therefore, all the energy of an alpha particle is delivered to only a few layers of cells, whereas the same energy delivered by x-rays or gamma-rays is diluted over a much larger volume of many millions of cells. A useful analogy is a watering hose used to water flowers. 
When the nozzle is set to “jet”, the water applies much more pressure as it hits the flowers than when it is set to “spray”, even though in both cases the volume of water being delivered (equivalent to the energies of the x-rays, gamma rays, and alpha particles) may be the same. This occurs because the water is concentrated into a small area in the case of the “jet” and spread over a large area in the case of the “spray”. These two differences between sparsely and densely ionizing radiations account for most of the increased damage that alpha particles cause to tissues and organs, for the same dose, relative to x-rays and gamma rays. The factor used to account for this difference in damage is called the quality factor of the radiation. X-rays and gamma rays are defined as having a quality factor of 1. Relative to this, alpha particles are defined as having a quality factor of 10. That is why radon gas is especially hazardous when inhaled. When only the surfaces of our body are exposed to radon gas, there is no risk, because the range of the alpha particles is so short that they cannot even penetrate the surface layers of our skin. Numerous studies have shown an association between radon gas exposure and lung cancer, which is why radon gas is of such concern as a component of natural radiation. The pie-chart in fig. 1 shows that radon gas constitutes, on average, about 55% of the entire natural annual effective dose we receive. Effective dose, explained a little farther on, incorporates the quality factor of the radiation. As in the case of gamma ray exposure from radium, which varies greatly depending on the local geological conditions, exposure to alpha particles from inhaled radon gas varies correspondingly with how much radium is present in the soil and building materials, and also with how readily radon gas can diffuse into buildings and how effectively ventilation can remove it. Typically, houses with basements are a much greater source of radon gas than houses without basements.
Houses built of brick are a greater source of radon gas than houses built of wood. And more modern “energy efficient” houses, with very insulating and weather-tight windows, are also a greater source of radon gas.

Cosmic radiation

Cosmic radiation originates in our sun and partially penetrates our atmosphere, exposing us mainly to gamma rays and charged particles, largely protons. Cosmic radiation varies greatly depending on elevation; the higher the elevation we live at, the higher is the cosmic radiation component, because there is less atmosphere to absorb the cosmic rays before they reach us. Pilots and cabin crew receive substantially more cosmic radiation exposure than the rest of us due to the hundreds of hours a year they spend at very high altitudes. In fact, among so-called radiation workers, pilots and cabin crew of long-haul aircraft receive close to the highest occupational radiation doses of any profession. The highest occupational doses are actually received by interventional radiologists working with specialized x-ray machines in hospitals.

As already mentioned, natural radiation varies depending on geographical location and elevation. For example, if we combine annual natural terrestrial and cosmic radiation doses, we find 48 mrem in New York City, 140 mrem in Denver, 300 mrem in Kerala (India), and 500 mrem in parts of the Brazilian coast. Intuitively, one would expect to observe elevated cancer rates, for example, for the populations in Kerala vs. New York City. In fact, such an association is generally not observed, leading to the supposition that for protracted, low-dose radiation exposures, cancer induction may not be elevated, but may in fact be decreased. Such an inverse relationship between protracted low-dose radiation exposure and cancer induction is also observed in other situations.
Neighboring provinces in Mainland China that are culturally identical but, due to geological factors, have natural radiation levels differing by almost a factor of three are observed to have a higher cancer rate in the province with the lower natural radiation levels. In the U.K., radiation workers experienced a significantly reduced cancer rate compared to workers in other industries after statistically confounding factors had been accounted for. Such data point to the possible existence of radiation hormesis, the phenomenon in which increased radiation causes a reduction in observed cancer rates. Radiation hormesis is a topic in radiation biology that has gained some traction after “coming out of the scientific closet” about 15 years or so ago. Although the mechanisms that result in radiation hormesis are still not entirely understood, they are gradually being elucidated.

Internal radiation

Internal radiation from naturally occurring radioisotopes originates from inside our bodies and is due mainly to naturally occurring radioactive potassium-40 and carbon-14. Potassium is an important component of the fluid inside our cells, and carbon constitutes a large fraction of virtually every tissue and chemical structure in our body. The potassium-40 exposes our bodies to gamma rays, while the carbon-14 exposes our bodies to electrons, often called beta rays. Potassium-40 and carbon-14 are a component of natural radiation that is totally unavoidable. An interesting aside: on average, men have about 20% more muscle mass than women, so their exposure from their own internal radiation is roughly 20% higher than that of women.
Furthermore, since the potassium-40 in our bodies decays producing gamma rays, which are quite penetrating and in fact to some degree escape from our bodies, if we routinely sleep with a partner our natural radiation exposure from potassium-40 is a few percent higher than if we remain celibate, because the gamma rays escaping from the internal potassium-40 in one partner will slightly increase the radiation dose that the other partner receives.

Medical radiation

Medical radiation exposes our bodies to radiation mainly through diagnostic x-rays and nuclear medicine procedures. Medical radiation doses dropped significantly in the 1940s with the introduction of screen-film technology, but have gradually risen again due to the increasing dependence on and utilization of high-technology x-ray diagnostic systems, in particular CT scanners. Obviously there are some individuals who do not get x-ray exams, while others may get many, so the medical component of radiation exposure actually varies tremendously and can only be considered in average terms. Another article in my Dr. Simple Science blog, titled “Do CT scans kill patients?”, goes into this topic a little more deeply.

Summary of natural radiation sources

The pie-chart in fig. 1 below summarizes the sources of natural radiation (including medical radiation) that we are exposed to, showing an average breakdown by annual effective dose and by percentage. The pie chart also shows the contribution of natural radiation from “consumer products”, which will be discussed in more detail later on.

Fig. 1. Pie chart of annual natural and medical effective doses received by the average individual in the U.S.

The doses shown in the pie-chart in fig. 1 are expressed as average “effective doses”. The concept of effective dose will be explained shortly.

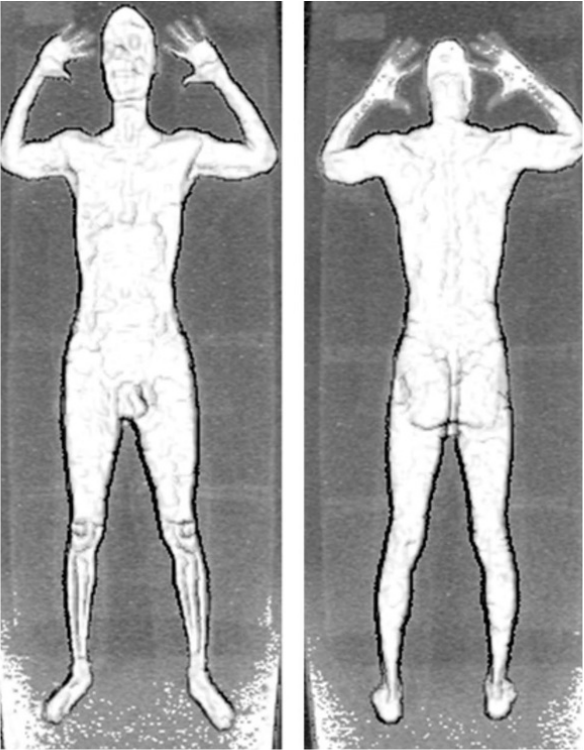

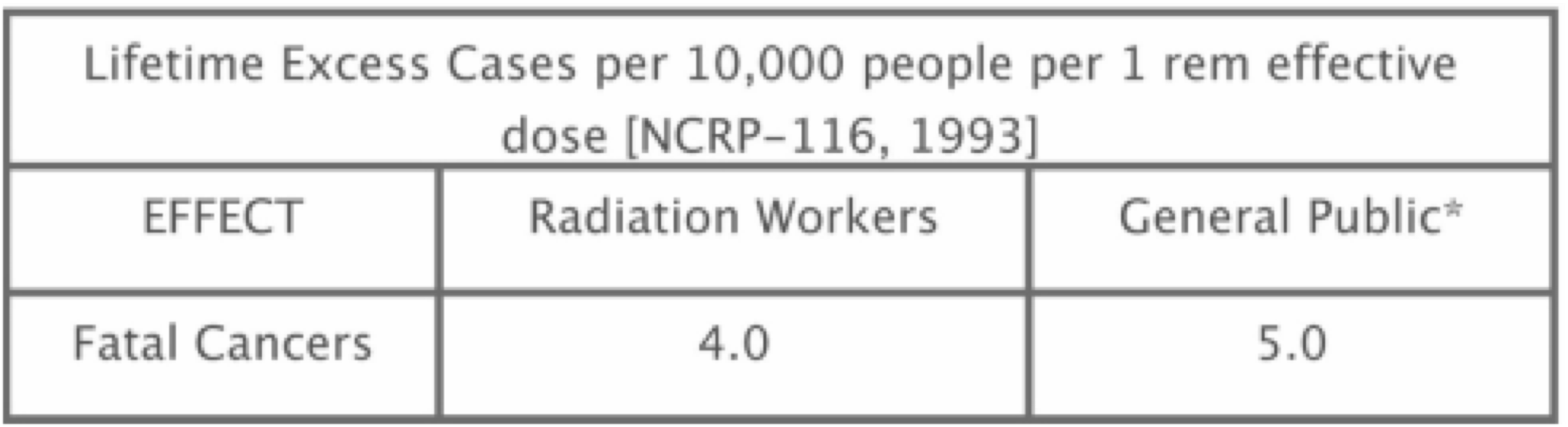

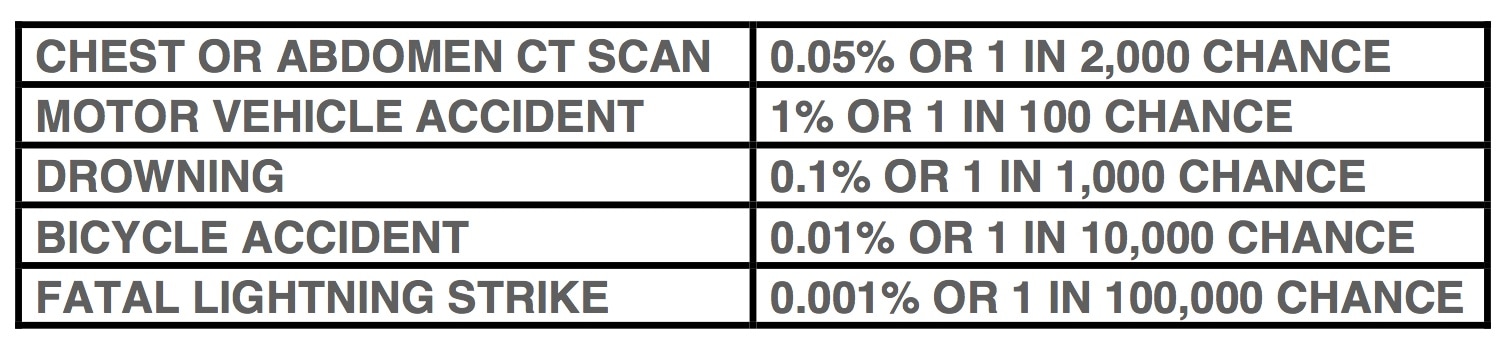

To summarize, natural radiation is composed of five main categories, as listed in table 2 below:

Table 2. The 5 main categories contributing to total annual natural radiation exposure in units of annual mrem (divide by 100 to convert to mSieverts) of "effective dose"

| CATEGORY | ANNUAL EFFECTIVE DOSE (mrem) | PERCENT OF EFFECTIVE DOSE |
|---|---|---|
| TERRESTRIAL | 28 | 8% |
| COSMIC | 27 | 7% |
| INTERNAL | 39 | 11% |
| MEDICAL | 53 | 15% |
| INHALED RADON GAS | 200 | 55% |
| REMAINDER | 11 | 3% |
| TOTAL | 360 mrem (3.6 mSv)/year | |

But what is effective dose? Permit me a slight digression into physics geekdom.

The concept of effective dose is used extensively when assessing the risks of radiation exposures to individuals and populations. As an example of how the effective dose concept is useful in the case of individuals, assume you have a chest x-ray. The x-rays will expose many organs in your body to varying doses: skin, lungs, heart, bone, bone marrow, etc. Furthermore, these exposed organs in your body will have different sensitivities for developing cancer from those x-ray doses (for example, the same radiation dose delivered to your arm as opposed to your bone marrow would be far less likely to produce a radiation-induced cancer). These varying sensitivities to radiation are expressed mathematically by the concept of tissue weighting factors in the effective dose calculation method. Similarly, if the same radiation dose were delivered to only half of the bone marrow, it is assumed it would be 50% less likely to cause a radiation-induced cancer than if that same radiation dose were delivered to the whole bone marrow. Finally, the type of radiation is included in the effective dose calculation as a quality factor. X-rays and gamma-rays are defined as having a quality factor of 1. Other radiations, such as neutrons or alpha particles, have quality factors higher than one, reflecting the greater amount of biological damage they produce for the same dose.
So to calculate effective dose, we determine, 1) which organs receive what doses of radiation; 2) what fractions of each of these organs is exposed; 3) the sensitivity each exposed organ has for developing radiation-induced cancer, i.e., the corresponding published tissue weighting factors; and 4) the quality factor of the radiation. We then sum up all the weighted doses and the result gives us the total effective dose. The beauty of the effective dose approach is that no matter how a particular radiation exposes an individual, i.e., from which direction, and with what width beam and shape, etc., the effective dose calculation takes into account all of these factors. The total effective dose can then be plugged into an effective dose vs. cancer risk model (such as the commonly used linear-no-threshold (LNT) model) to yield the final probability that an individual will develop a radiation-induced cancer from that diagnostic exam. For the chest x-ray we postulated in this example, the total effective dose would numerically be approximately 5 times lower than the actual skin dose delivered within the boundaries of the x-ray beam. Correspondingly, the risk for an individual developing cancer from this chest x-ray would theoretically be 5 times lower than if the old-fashioned skin dose assessment were employed. The simple skin dose approach was utilized for assessing radiation risks from diagnostic x-ray procedures until about 15 years ago, but proved notoriously inaccurate in predicting cancer risk. Here is an example of how misunderstood effective dose is by those who really should understand it. The TSA at U.S. airports is in charge of the operation of threat detection devices such as x-ray backscatter body scanners. Following a request by a congressional committee, the TSA recently released the effective doses delivered to subjects undergoing x-ray backscatter body scans. 
However, following this disclosure the TSA was vehemently criticized by certain (lay) watchdog groups because, they claimed, the TSA when stating the doses delivered by these devices only gave the effective dose, whereas the dose to the subject’s skin was far higher. This is where the misunderstanding arises; yes, the dose to the skin is indeed far higher numerically than the effective dose, but what was not understood by the watchdog groups, or the congressional committee, was that the higher skin dose was completely accounted for in the formalism for calculating effective dose, as we demonstrated in the example above of an individual receiving a chest x-ray. The TSA was completely correct in reporting the cancer risk based on the effective dose and not on the skin dose.

How are data needed to perform effective dose calculations obtained?

In order to calculate effective dose, we first have to use a theoretical calculation method called Monte Carlo simulation. This calculation gives us the distribution of the dose from a specific radiation exposure of the subject on an organ-by-organ basis. The calculation uses realistic mathematical human models of varying sizes and shapes. The Monte Carlo calculation provides the values of all full and partial organ doses, from which the effective dose can be calculated as described above.

We have reviewed the kinds of natural radiation that every one of us is exposed to, whether we want to be or not. But what is the absolute cancer risk that the effective doses we calculate (say, for a chest x-ray) actually produce? The explanation of how we take this next step is beyond the scope of this article. Another article in my Dr. Simple Science blog, titled “How Dangerous is Radiation to Humans—or is it?”, explains how effective doses are converted to absolute cancer risk, as well as the many pitfalls of this process.
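The bookkeeping behind an effective dose calculation, as described earlier in this section, can be sketched in a few lines of Python. This is only an illustration of the weighted sum: the function name `effective_dose` is made up for this example, and every organ dose, exposed fraction, and tissue weighting factor below is a hypothetical placeholder rather than a published value or the output of a real Monte Carlo simulation.

```python
def effective_dose(organ_entries):
    """Sum the weighted organ doses into a single effective dose (mrem).

    Each entry is a tuple of:
      (organ dose in mrem, fraction of organ exposed,
       tissue weighting factor, quality factor of the radiation)
    """
    total = 0.0
    for dose, fraction_exposed, tissue_weight, quality_factor in organ_entries:
        total += dose * fraction_exposed * tissue_weight * quality_factor
    return total


# Hypothetical x-ray exposure (quality factor 1 for x-rays, per the text).
# All other numbers are invented purely to show the arithmetic.
exposure = [
    (10.0, 1.0, 0.12, 1.0),  # lungs, fully in the beam
    (10.0, 0.5, 0.12, 1.0),  # bone marrow, half of it in the beam
    (20.0, 0.2, 0.01, 1.0),  # skin, high local dose but low sensitivity
]
print(f"Effective dose: {effective_dose(exposure):.2f} mrem")
```

Note how the skin entry contributes very little to the total even though its local dose is the highest in the list; this is the point the watchdog groups missed in the TSA example.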
So let us assume that these absolute cancer risks from radiation have been determined, and talk about equivalent risks from various non-radiation-related human activities.

Risks of non-radiation-related human activities compared to risks from radiation

Without explaining how the following information was obtained (which is discussed in another of my Dr. Simple Science articles, titled “How Dangerous is Radiation to Humans—or is it?”), let us assume that the probability of a single individual getting a fatal radiation-induced cancer due to an effective dose of 1 rem (roughly equivalent, for example, to a CT scan of the pelvis) is 0.05%. The corresponding non-radiation-related activities that carry the same actuarially determined risk of death are listed in table 3 below.

Table 3. Non-radiation-related activities that carry the same actuarially determined risk of death as a single CT scan delivering an effective dose of 1 rem (10 mSv)

| BEHAVIOR / SITUATION | CAUSE OF DEATH |
|---|---|
| 1 rem (10 mSv) effective dose | Induced cancer from radiation (assuming the LNT hypothesis) |
| Smoking 28 packs of cigarettes | Cancer, heart disease |
| Drinking 200 liters of wine | Cirrhosis of the liver |
| Spending 400 hours in a coal mine | Black lung disease |
| Spending 1,200 hours in a coal mine | Accident |
| Living 2 years in New York or Boston | Air pollution |
| Traveling 40 hours by canoe | Accident |
| Traveling 70 hours by bicycle | Accident |
| Traveling 20,000 miles by car | Accident |
| Flying 400,000 miles by jet | Accident |
| Flying 600,000 miles by jet | Cancer from cosmic radiation |
| Living 7 years in Denver | Cancer from cosmic radiation |
| Living 17 years in a stone/brick building | Cancer from natural radiation |
| 500 chest x-rays or 20 mammograms | Cancer from radiation |
| Living 33 years with a cigarette smoker | Cancer and heart disease |
| Eating 1,600 tablespoons of peanut butter | Liver cancer from aflatoxin-B |
| Living 500 years at the boundary of a nuclear power-plant | Cancer from radiation |
| Drinking Miami water for 400 years | Cancer from chloroform exposure |
| Eating 40,000 charcoal broiled steaks | Cancer from benzopyrene exposure |

[Source: http://www.med.harvard.edu/JPNM/physics/safety/risks/risklist.html]

Included in the pie-chart in fig. 1 are slices of pie that are not part of naturally occurring radiation exposure. For example, there is a slice that corresponds to radiation from consumer products. Although, as already said, the latter is not part of natural radiation exposure (after all, we are not forced to use such products), it is quite interesting to consider which consumer products in fact do produce radiation exposure, however large or small.

Radiation exposure from consumer products

Cigarettes

In addition to being subject to natural radiation as is the population at large, cigarette smokers receive, on average, an annual effective dose of about 1,300 mrem. No, that is not a typographical error. 1,300 mrem (13 mSv)! This is about 4 times the true natural effective dose of 360 mrem (3.6 mSv) per year. The reason for this perhaps puzzling fact is that the tobacco plant contains two naturally occurring radioisotopes, polonium-210 and lead-210. These radioisotopes actually originate in the fertilizer that is used in the growing of the tobacco plant. Subsequently, these two radioisotopes become trapped in tobacco smoke particles that are inhaled by smokers. Although the absolute concentration of the polonium-210 and lead-210 in tobacco smoke is very low, the tar in cigarette smoke traps the smoke particles in small passages in the lungs called bronchioles, so that the polonium-210 and lead-210 remain in contact with the walls of the bronchioles for extended periods of time, causing a substantial radiation dose to be delivered to the cells of the bronchial walls. Furthermore, both polonium-210 and lead-210 are alpha particle emitters, and we have already explained why alpha emitting radioisotopes have a relative effectiveness for producing cancer that is 10 times higher than that of x-rays or gamma-rays.
The average annual actual dose to the lining of the bronchioles of the average cigarette smoker (in contrast to the 1,300 mrem effective dose) is in fact about 10,000-11,000 mrem (100-110 mSv)! Since it is impossible to remove the polonium-210 and lead-210 from tobacco, it is not clear what proportion of the greatly elevated cancer rate observed in cigarette smokers is due to the chemicals present in tobacco and what proportion is due to the associated high radiation dose.

Smoke detectors

Most residential smoke detectors contain americium-241 radioisotope sources. Americium-241 is an alpha particle emitting radioisotope. With proper use, no significant radiation is measurable outside the unit, but if the smoke detector is discarded or broken, the americium-241 source can fracture and surrounding objects may be contaminated, leading to the possibility of human contamination.

Luminous watches and clocks

Modern watches and clocks sometimes use a small quantity of the radioisotope hydrogen-3 (called tritium) or promethium-147 as a source of luminosity. Some older watches and clocks, right up to the 1960s, used radium-226 as a source of luminosity. As mentioned earlier, radium-226 radioactively decays into radon gas, which could be inhaled. Furthermore, if these watches or clocks are discarded or broken, the radium-226 would contaminate surrounding objects and anyone handling these objects. In the early part of the 20th century, workers who painted the numerals on the dials of luminous watches and clocks were known to lick the ends of their paint brushes to make a nice sharp point; but in doing so, they absorbed dangerous amounts of radium-226, and many of them developed cancers as a result.

Pottery

Ceramic materials, such as tiles and pottery, and in particular orange-colored Fiesta-Ware, often contain elevated levels of naturally occurring radioactive uranium, thorium-232, and/or potassium-40. In most cases, the radioactivity is concentrated in the glaze.
Lantern mantles

While it is less common than it once was, some brands of lantern mantles incorporate thorium-232. In fact, it is the heating of the thorium by the burning gas or liquid that is responsible for the emission of light. Such mantles are sufficiently radioactive that when discarded they are often used as check sources for radiation survey meters.

Glassware

Glassware, especially antique glassware with a yellow or greenish color, can contain detectable quantities of uranium. Such uranium-containing glass is often referred to as canary or vaseline glass. Collectors are also attracted to uranium glass for the attractive glow that is produced when the glass is exposed to black (ultraviolet) light. Even ordinary glass can contain high enough levels of potassium-40 or thorium-232 to be detectable. Older camera lenses (1950s-1970s) often had coatings of thorium-232 to alter the index of refraction.

Antique radioactive “curative” devices

In the past, primarily from the 1920s through the 1950s, a wide range of radioactive products were sold as curative devices. Examples include radium-containing pills, pads, and solutions, and devices designed to add radon to drinking water. Most such devices were relatively harmless (as well as being useless), but occasionally one can be encountered that contains potentially hazardous levels of radium-226.

Fertilizers

Commercial fertilizers are designed to provide varying levels of potassium, phosphorus, and nitrogen. Such fertilizers can be measurably radioactive for two reasons: potassium is naturally radioactive due to its radioisotope potassium-40 (as explained earlier in the section on internal natural radiation), while the phosphorus can be derived from phosphate ore that contains elevated levels of uranium-238 and radium-226. The radioactivity of fertilizers is most important because of the polonium-210 and lead-210 that are transferred to plants, in particular the tobacco plant, which (as explained earlier) can be highly hazardous to smokers.
Food

Food contains a variety of different naturally occurring radioactive materials. Although the relatively small quantities of food in a home at any one time contain too little radioactivity to be readily detectable or hazardous, bulk shipments of food have been known to set off the sensitive radiation monitors at border crossings. One exception is low-sodium salt substitutes, which often contain enough potassium-40 to double the level of natural radioactivity.

Granite countertops

Beautiful granite countertops are radioactive to a small extent. The granite continually releases radon-222 gas into the air due to the presence of radium-226 in the granite. Although the amount released can vary considerably from one type of granite to another, the radon concentrations in most kitchens tested are much less than the EPA's "safe" limit of 4 picoCi/liter. While the radioactive material in the granite can produce a reading on a sensitive radiation detection instrument, the levels of radiation are well below the level that would result in any harm to humans; so don't replace your granite countertops on account of the minuscule quantities of radon produced, but develop a geeky party patter to tell others about it!

Long-haul airline flights

Long-haul airline flights cause the passengers and cabin staff to incur higher cosmic radiation doses than the rest of us who remain on terra firma. This occurs for two reasons. First, at cruising altitudes of 30,000-40,000 ft, there is very little atmosphere left to shield the traveler from cosmic rays. Second, many cosmic rays are deflected by the earth’s magnetic field before they reach ground level, but near the poles the magnetic field is unfavorably oriented to deflect them; since long-haul flights often cross over the poles to exploit the shorter distance of the great circle routes, the pilots, crew, and passengers get a double whammy with regard to increased radiation levels.
The lowest dose rates measured during a long-haul flight are approximately 0.3 mrem/hour (3 µSv/hour) during a Paris-Buenos Aires flight totaling approximately 13 hours, resulting in a round-trip additional effective dose of 8 mrem (80 µSv). The highest dose rates measured during long-haul flights are approximately 0.66 mrem/hour (6.6 µSv/hour) on Paris-Tokyo flights totaling approximately 12 hours, and 1 mrem/hour (9.7 µSv/hour) on the same route in the Concorde, totaling approximately 4 hours. For the Paris-Tokyo flight, this would result in a round-trip additional effective dose of 16 mrem (160 µSv), or 8 mrem (80 µSv) in the Concorde. For long-haul pilots, who typically fly 700-1,000 hours a year, these additional cosmic ray exposures could add roughly 400-600 mrem/year (4-6 mSv/year) to their “ground-based” natural exposure average of 360 mrem/year (3.6 mSv/year). [Adapted from reference: https://www.hps.org/publicinformation/ate/faqs/commercialflights.html]

Summary

In this article we have discussed the sources of natural radiation to which we are all exposed, whether we choose to be or not. These are terrestrial, cosmic, internal, medical, and radon inhalation. Percentage-wise, these constitute, respectively, 8% (terrestrial), 7% (cosmic), 11% (internal), 15% (medical), and 55% (radon inhalation), for a total annual effective dose of 360 mrem (3.6 mSv). Expressing doses as effective doses does away with any need to make corrections for radiation type, exposure geometry, and other exposure conditions; effective doses can then be plugged directly into radiation dose vs. cancer risk models such as the frequently used linear-no-threshold (LNT) model. The substantial annual radon inhalation component (55%) in the natural effective dose is due to a number of factors, but most importantly to the radiobiological properties of radon-222 as an alpha particle emitter and the associated high quality factor of 10.
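As a rough illustration of the percentage breakdown quoted in the summary, the short Python sketch below converts each share of the 360 mrem annual total into an absolute dose. The percentages and the total are taken from the text; the function and variable names are purely illustrative. Note that the five listed shares add up to 96%, so a small remainder is not itemized in this summary.

```python
# Shares of the annual effective dose as quoted in the summary (percent).
TOTAL_MREM = 360.0
SHARES_PERCENT = {
    "terrestrial": 8,
    "cosmic": 7,
    "internal": 11,
    "medical": 15,
    "radon inhalation": 55,
}


def mrem_to_msv(mrem):
    """Convert millirem to millisievert (1 mSv = 100 mrem)."""
    return mrem / 100.0


def dose_by_source(total_mrem, shares_percent):
    """Return the absolute dose (mrem) attributed to each source."""
    return {name: total_mrem * pct / 100.0 for name, pct in shares_percent.items()}


doses = dose_by_source(TOTAL_MREM, SHARES_PERCENT)
listed_percent = sum(SHARES_PERCENT.values())

if __name__ == "__main__":
    for name, mrem in doses.items():
        print(f"{name:>16}: {mrem:6.1f} mrem ({mrem_to_msv(mrem):.2f} mSv)")
    print(f"listed shares sum to {listed_percent}% of {TOTAL_MREM:.0f} mrem")
```

On these numbers, radon inhalation alone accounts for roughly 200 mrem of the annual total, which is why it dominates the discussion.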
Long-haul pilots, crew, and passengers are typically exposed to additional effective doses of approximately 0.5 mrem/hr (5 µSv/hr), due mainly to the thinner atmospheric shielding against cosmic rays at high cruising altitudes and the reduced protection from the earth’s magnetic field on great-circle routes over the poles.

Many consumer products are manufactured with radioisotopes of various kinds as necessary components of the product. During normal use, these consumer products are completely safe, but following their disposal there is frequently the possibility of hazardous contamination.

Tobacco smokers, on average, incur an additional annual effective dose of 1,300 mrem (13 mSv), due to polonium-210 and lead-210, both alpha-particle-emitting radioisotopes, which are absorbed from fertilizer by the tobacco plant and become trapped in the bronchioles by the tar in the cigarette smoke. This keeps these radionuclides in intimate contact with the lining cells of the bronchioles for extended periods of time and, with the high quality factor of 10 for alpha particles, produces actual doses as high as 10,000-11,000 mrem (100-110 mSv). But because the polonium-210 and lead-210 cannot be removed from tobacco, it is impossible to conclude whether the high incidence of cardiovascular disease and lung cancer observed in smokers is due to the presence of polonium-210 and lead-210 or to other factors connected with smoke inhalation.

Some radiobiological studies have shown decreased incidence of cancer with increasing effective dose in the range of 0-10 rem (0-0.1 Sv). This inverse relationship is called radiation hormesis. The scientific facts explaining radiation hormesis are being gradually elucidated, and it appears that the existence of a radiation hormetic effect in the range of typical diagnostic x-ray doses is real.
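The flight-dose figures quoted in this article follow from simple multiplication of dose rate by flight time. The Python sketch below reproduces that arithmetic under stated assumptions: the dose rates and flight durations are the ones given in the text, the quoted durations are treated as one-way times, and the pilot estimate assumes a constant average rate of 0.5 mrem/hour. The function names are illustrative only.

```python
def mrem_to_usv(mrem):
    """Convert millirem to microsievert (1 mrem = 10 microsievert)."""
    return mrem * 10.0


def flight_dose_mrem(rate_mrem_per_hour, hours_one_way, round_trip=True):
    """Additional effective dose for a flight at a constant dose rate."""
    legs = 2 if round_trip else 1
    return rate_mrem_per_hour * hours_one_way * legs


def annual_pilot_dose_mrem(rate_mrem_per_hour, flight_hours_per_year):
    """Additional annual dose for a pilot, assuming a constant average rate."""
    return rate_mrem_per_hour * flight_hours_per_year


# Routes and rates as quoted in the text (durations assumed to be one-way).
paris_buenos_aires = flight_dose_mrem(0.3, 13)
paris_tokyo = flight_dose_mrem(0.66, 12)
concorde_paris_tokyo = flight_dose_mrem(1.0, 4)

# Assumed average rate of 0.5 mrem/hour over 700 to 1,000 flight hours.
pilot_low = annual_pilot_dose_mrem(0.5, 700)
pilot_high = annual_pilot_dose_mrem(0.5, 1000)

if __name__ == "__main__":
    for label, dose in [
        ("Paris-Buenos Aires round trip", paris_buenos_aires),
        ("Paris-Tokyo round trip", paris_tokyo),
        ("Paris-Tokyo round trip (Concorde)", concorde_paris_tokyo),
    ]:
        print(f"{label}: {dose:.1f} mrem ({mrem_to_usv(dose):.0f} microsievert)")
    print(f"Pilot, 700-1,000 h/year: {pilot_low:.0f}-{pilot_high:.0f} mrem/year")
```

With the 0.5 mrem/hour average, the pilot estimate comes out somewhat below the 400-600 mrem/year range quoted earlier; an average closer to 0.6 mrem/hour, which lies within the measured range, would reproduce that range more closely.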
An implementation of the x-ray backscatter technique that is a modification of the one described in this blog has been developed by American Science and Engineering. Rather than being designed to detect threat objects on airline travelers, which is the focus of this narrative, this new implementation is scaled up to examine the contents of cargo containers; for example, shipping containers and trucks. The backscatter system is scaled up by using much higher energy x-rays, plus other modifications, to enable the x-rays to penetrate into much larger objects. Instead of conventional "diagnostic" x-ray machines, this device uses linear accelerators, the same technology that is used for radiation treatment of cancer, which produce x-rays of approximately 10-20 times higher energy than the airport threat detection devices described in this blog. The picture above shows a truck that attempted to cross the border into the United States. A simple physical examination suggested that the truck contained bananas. However, the center of the cargo area contained a compartment within which 20 or so illegal aliens were attempting to cross the border undetected. The x-ray backscatter image showed the human cargo very clearly.

Introduction